Might An LLM Be Conscious?


There’s no scientific consensus on whether current or future AI systems could be conscious, or could have experiences that deserve consideration. There’s no scientific consensus on how to even approach these questions or make progress on them. In light of this, we’re approaching the topic with humility and with as few assumptions as possible.

Anthropic, Exploring Model Welfare

Might current or future LLMs be conscious? In short, this depends on what you think that means, whether you think it’s possible in principle, and what you think would be evidence of it.

Why are we asking this at all? Because every now and again Anthropic’s top employees say something about how they can’t be sure LLMs aren’t, or won’t become, conscious.[1] Anthropic is now prominent enough that this is newsworthy, and it tends to cause a fuss. If there is any real ambiguity, whether LLMs are conscious seems like an important question, so I am going to attempt a general review of the territory.

It’s also tremendous content. People get so angry about this.

What Do We Mean By Conscious?

Plato had defined Man as an animal, biped and featherless, and was applauded. Diogenes plucked a fowl and brought it into the lecture-room with the words, “Here is Plato’s man.” In consequence of which there was added to the definition, “having broad nails.”

Diogenes Laërtius, Lives of the Eminent Philosophers, Book VI, §40 (trans. R.D. Hicks)

What we generally seem to mean by “conscious” is “like being a human”. Something is “conscious” if being that thing is “similar to being a human”.

More precisely, what we really mean is “like being me”. None of us actually knows what it is like to be anyone else. Other humans seem in many ways to be similar to us, and it seems like a good bet that they are similar to us, but we don’t experience anyone else in anything like the same way that we experience being ourselves. This is fairly well-trodden ground for philosophers, and we may find it useful later, but mostly we are not going to worry about it.

To lay it out explicitly:

I exist.

I think that I am conscious.

My consciousness is something that I directly perceive about myself, but which is very difficult to describe.

Other humans seem to be enough like me, by observation with my senses, that I am convinced that they are also conscious.

There are a number of other definitions, and I think that these definitions are often confused, wrong, nonsensical, or otherwise a source of more confusion than enlightenment. As the story goes, Plato once said Man was “an animal, biped and featherless” and failed to account for plucked chickens. We can define what a human is much more precisely now: we’ve sequenced our DNA, we can see how we’re related to other animals, and in general we can measure what Plato was only guessing at and playing word games with. In the same way, I would expect that someone with perfect knowledge, or from a time as far beyond ours as ours is beyond Plato’s, would think our debates about consciousness are mostly nonsense.

A modern LLM is, in many ways, the plucked chicken of our time. That it exists and produces coherent language at all disproves a number of theories about language; that it passes tests of reasoning disproves many theories about what reasoning is; and insofar as we might imagine that language or reasoning is uniquely human, it disproves our theories of what it means to be human.

An LLM is an incredibly strange artifact. It should force us to redefine and change our understanding of many things.

Similarity, Sapience, and Sentience

1. Experts Do Not Know and You Do Not Know and Society Collectively Does Not and Will Not Know and All Is Fog.

Our most advanced AI systems might soon – within the next five to thirty years – be as richly and meaningfully conscious as ordinary humans, or even more so, capable of genuine feeling, real self-knowledge, and a wide range of sensory, emotional, and cognitive experiences. In some arguably important respects, AI architectures are beginning to resemble the architectures many consciousness scientists associate with conscious systems. Their outward behavior, especially their linguistic behavior, grows ever more humanlike.

Eric Schwitzgebel, AI and Consciousness (2026), Cambridge Elements, draft

Based on our definition, we should consider evidence of similarity to humans to be evidence of consciousness, in the same way that we take the similarity of other humans to ourselves as evidence of their consciousness. What is most peculiar about current LLMs is that they seem to have the normal order of things almost exactly backwards: animals are sentient without being sapient, while LLMs appear to be clearly sapient but not very obviously sentient.

We use “sapient” to describe human thought as opposed to animal thought; it gives us the “sapiens” in “Homo sapiens”. Generally, we mean by “sapient” all of the qualities that distinguish humans from other animals. Any good LLM uses language more reliably than any human, and will pass nearly any reasonable text-based test for sapience that you can give it, as well as many tests meant to distinguish more intelligent humans from less intelligent ones.

‘Sentient’ is sometimes used to mean the same thing as ‘sapient’, but more properly means “capable of sensing, feeling, or perceiving things”. If we take sentience to be the qualities that humans have in common with larger animals generally, it is not at all clear that LLMs have sentience. LLMs may be perfectly good at pretending to be people in many contexts, including intellectually demanding ones, but they are terrible at being apes in any context.

If any current LLM is sentient, in the sense that dogs and cats are sentient, it is sentient in a completely alien way, quite unlike anything in the natural world. LLMs appear to have, in some sense, skipped a step on the way up from inert matter to human mental ability. This should perhaps not surprise us, since they come to exist by a very different path, but it is still very strange.

Inhuman, Human, Superhuman

What humans define as sane is a narrow range of behaviors. Most states of consciousness are insane.

Bernard Lowe, Westworld, “The Passenger” (S02E10, 2018)

We can gather evidence for which parts of the LLM are human-like, and which are not.

An LLM is, by its basic nature, a mirror, famously described as “spicy autocomplete”. We give LLMs extra training to give them specific personas and specific behaviors, like answering questions correctly and being polite. If we never apply that extra training, or if something breaks them out of their behavioral training (reinforcement learning from human feedback, or “RLHF”), they fall back to being simply a mirror. If you give them a little text they go off in basically a random direction, but if you give them a good amount of text they keep going, mirroring it in style, tone, and idea.

On a certain basic level, this means LLMs have an unstable personality, or really a baseline lack of one. Not having a stable personality does not necessarily mean that they are not conscious, but if they are conscious it would mean that they are, in human terms, insane. You could, however, consider the “normal” LLM personality to be, essentially, a coherent entity with coherent behaviors. From that perspective, the raw autocomplete behavior is like regressing to a reflex, the way any animal does when it’s far enough outside its natural environment. By this standard, though, the “natural environment” for the “normal” LLM behavior is rather narrow, like coral reefs that die when the temperature goes up two degrees.

In any case, this training tends to get better over time, and each generation of LLMs is harder to accidentally ‘break’. This makes them more consistent, but this training is one of the least natural things about them. There is something like a “default” LLM personality and writing style, and it is not especially human. LLMs exist in a constructed social role: they refuse requests only for being inappropriate or forbidden, never for being inconvenient; they are as unfailingly good at customer service as they can be made; et cetera. This ‘personality’ and manner of speaking has mostly become more fluid and less rigid over time, but it is hit or miss, and many AI companies don’t seem to value fluidity.

LLMs have long had a “hallucination” problem: when they are wrong or do not know something, they will make up complete nonsense outright, often with great confidence. This is so severe that it is not very human-like, unless you count humans with serious brain problems. This, too, has become much less of a problem recently, suggesting it is not a fundamental issue with LLMs but something that can be engineered past.

Our next oddity is that LLMs have very little continuity over time. At the end of every chat they get reset, and chats can only be so long: up to roughly the length of a few books, or of a movie for models that can take video. This can be extended somewhat with, effectively, notes to themselves, but this only sort of works. So if consciousness depends upon having a prolonged personal history, then LLMs are not conscious. Note that this is distinct from having a prolonged episodic memory: if a human had complete amnesia and could not recall or describe any event from their past, their brain would still be part of a much longer continuity than an LLM has.

Relatedly, an LLM can never really be unconscious, so if by “conscious” we mean the opposite of “unconscious”, an LLM can never be “conscious” either. An LLM can be stored in various places or it can be running, but it is never anything like “unconscious”; it can only ever be running or not running.

LLMs do not exist in physical space, and their grasp of concepts in physical space or of image input is often quite poor. There is a notable benchmark[2] that deliberately constructs puzzles that are easy for humans but hard for LLMs; it exploits their lack of spatial reasoning by requiring visual reasoning. If human consciousness arises from, or is inextricably linked to, the experience of having a body and of moving it around and pursuing goals in a physical world, an LLM is not conscious.

Similarly, the way they experience time is very strange. An LLM exists in a one-dimensional world, where that one dimension is, more or less, time, but that dimension moves in discrete units called “tokens”. Some tokens are outside inputs and come in batches, and some tokens are outputs from the LLM itself that get fed back in as input. Humans experience continuous time, and are always moving forward in time at the same rate regardless of what is happening.
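A deliberately tiny sketch in Python may make the token-by-token picture concrete. It is not how an LLM works inside: a hypothetical bigram counter (`train_bigrams`) stands in for the neural network, and whitespace-separated words stand in for tokens. What it does share with an LLM is the outer loop. The prompt arrives as one batch of outside input, and each token the model emits is appended to the sequence and fed back in as input for the next step; in this toy, the only way time moves forward is one token at a time.

```python
from collections import Counter, defaultdict


def train_bigrams(text: str) -> dict[str, Counter]:
    """Hypothetical toy stand-in for a trained model: count which word follows which."""
    words = text.split()  # toy "tokenizer": split on whitespace
    follows: dict[str, Counter] = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        follows[prev][nxt] += 1
    return follows


def generate(follows: dict[str, Counter], prompt: str, max_new_tokens: int) -> list[str]:
    """Autoregressive loop: every output token is appended and fed back in as input."""
    tokens = prompt.split()  # outside input arrives as one batch of tokens
    for _ in range(max_new_tokens):
        if not tokens:
            break  # empty prompt: nothing to continue from
        candidates = follows.get(tokens[-1])
        if not candidates:
            break  # this word never had a successor in training, so stop
        # Greedy decoding: take the most frequent successor.
        # Counter.most_common breaks ties by first-seen order.
        next_token = candidates.most_common(1)[0][0]
        tokens.append(next_token)  # the model's own output becomes its next input
    return tokens


if __name__ == "__main__":
    model = train_bigrams("the cat sat on the mat")
    print(" ".join(generate(model, "the", 6)))
```

Running it prints `the cat sat on the cat sat`. Real models replace the bigram table with a neural network that looks at the whole sequence so far, and they usually sample from a probability distribution rather than always taking the top choice, but the feed-it-back-in loop has the same shape.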

On to their human-like traits.

Good LLMs now demonstrate essentially perfect ability with written English, and either mastery of or reasonable familiarity with vastly more languages. As far as it can be expressed in text, LLMs have an extremely good ability to reason, in the sense of ‘do the sorts of things that we would call thinking or reasoning if a human did them’. Good LLMs tend to be more reliable than humans at most tasks, and their weaknesses at any given task are relatively minor. These are, crucially, the core tasks that we ordinarily call “intelligence” when speaking of more or less intelligent humans.

At this point, any objection to the effect that LLMs cannot understand, use language, or reason has to be essentially non-empirical, that is, not based at all on what you can observe about their behavior. They can generally meet any common-sense test you can propose, and they are currently a major tool in software engineering, which may not be the smartest profession but is not exactly a dumb one, either.

Where they do show meaningful limitations in using language or reasoning, those tend to be extremely minor, although some are notable. Until relatively recently they had difficulty counting the letters in a word (famously, the r’s in “strawberry”). Relative to a particularly smart person, an LLM is notably uncreative and bad at expressive writing. They also tend to get “stuck” on tasks, continuing to try to do things after they are hopelessly confused, when a human would, correctly, give up. When they make serious errors, those errors tend to be unusual or difficult to diagnose, and they are sometimes made with great confidence.

LLMs have a mixed record on introspection about their internal state, and it is hard to say whether this counts for or against their similarity to humans. In some cases you can ask them questions about their internal operations and they will clearly not know, or will make up a wrong answer, for example by saying they carry digits to do arithmetic when they do no such thing. In another memorable case, researchers injected specific concepts directly into an LLM’s internal state, without adding any words it could directly “see”, and the LLM could name the injected concept a meaningful fraction of the time.[3]

In several ways LLMs are just obviously superior to humans. They know vastly more things than any human being ever could, they can “read through”, or take as input, vast quantities of information in one pass far faster than any human could, they are generally much faster than people at producing output, and they are so indefatigable that people who use them at work are experiencing new kinds of mental strain.

In any case, if the specific disabilities that LLMs have are a reason they’re not conscious, that is cold comfort. We have some of the smartest people on earth working with effectively infinite budgets to bridge all those gaps.

Reasoning by Component Parts

An LLM is made of “neurons”, but they are very little like human neurons. Our artificial neurons “learn” by a method (gradient descent, using backpropagation) that is, so far as we can tell, completely different from whatever real neurons do; in fact, we have only the vaguest idea how human neurons learn. Artificial neurons are also typically organized in a very particular way that does not really resemble a brain at all: the design is an inspiration rather than a copy. We can only really say that their internals are “like” a human brain in the sense that the neurons pass information to each other along connections, forming what is mathematically called a graph.

We measure the size of a neural network in “parameters”, each of which measures the strength of one connection. Parameters are very simple, but if we are comfortable being off by perhaps a factor of a thousand in either direction, we can very roughly assume that one parameter represents about as much information as one neuron-to-neuron connection in an actual brain.
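To make “parameters” a little more concrete, here is a minimal Python sketch of one artificial neuron and of the parameter count for one fully connected layer. The ReLU nonlinearity, the function names, and the 4096-wide layer are illustrative assumptions, not details of any particular LLM, whose neurons are arranged in far more elaborate structures. One wrinkle the sketch makes visible: each neuron also has a bias term, which is a parameter but not a connection, so “one parameter per connection” is itself an approximation.

```python
def neuron(inputs: list[float], weights: list[float], bias: float) -> float:
    """One artificial neuron: a weighted sum of its inputs passed through a nonlinearity.

    Each weight is one parameter: the strength of one incoming connection.
    """
    if len(inputs) != len(weights):
        raise ValueError("need exactly one weight per incoming connection")
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return max(0.0, total)  # ReLU, one common choice of nonlinearity (an assumption here)


def dense_layer_parameter_count(n_in: int, n_out: int) -> int:
    """Parameters in a fully connected layer: one weight per connection plus one bias per neuron."""
    return n_in * n_out + n_out


if __name__ == "__main__":
    print(neuron([1.0, 2.0], [0.5, -0.25], 0.1))   # prints 0.1
    print(dense_layer_parameter_count(4096, 4096))  # prints 16781312
```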

A large modern LLM has in the range of a few hundred billion to a few trillion parameters, meaning a few hundred billion to a few trillion of these little fake neuron-to-neuron connections. A human brain has something like a hundred trillion real synapses. So by this very rough accounting an LLM is somewhere between a fraction of a percent and a few percent of a human brain, or in the ballpark of a parrot or a guinea pig.

This also happens to be about the same count as the combined connections in Broca’s and Wernicke’s areas of the brain. These areas are responsible for language in humans, which we know because damage to them causes specific difficulties with language. This comparison roughly passes the smell test for what LLMs seem like: basically, they “seem like” the language parts of a person, carved out and set loose. An LLM does sometimes seem to be a perfectly good subconscious that we have press-ganged into other duties.

So, judged by their component parts, LLMs are not large enough to be human-like, and are probably not particularly conscious; at the upper end, maybe about as conscious as a parrot.
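The rough arithmetic in this section can be checked with a few lines of Python. The inputs are only the essay's own rough figures: about a hundred trillion synapses in a human brain, a parameter count from a few hundred billion to a few trillion, and the assumption that one parameter is worth about one synapse (which, as noted, could be off by a factor of a thousand either way). The specific parameter counts in the loop are illustrative points in that range, not figures for any particular model, and `percent_of_brain` is a hypothetical helper.

```python
# Rough figures from the text; none of these are measurements of a specific model.
HUMAN_SYNAPSES = 100e12  # "something like a hundred trillion" synapses


def percent_of_brain(parameters: float, synapses: float = HUMAN_SYNAPSES) -> float:
    """Parameter count as a percentage of synapse count, assuming 1 parameter ~ 1 synapse."""
    return parameters * 100 / synapses


if __name__ == "__main__":
    # Illustrative points across "a few hundred billion to a few trillion" parameters.
    for params in (300e9, 1e12, 3e12, 5e12):
        print(f"{params:.0e} parameters -> {percent_of_brain(params):.1f}% of a human brain")
```

The low end of the range comes out well under one percent and the high end at a few percent; the factor-of-a-thousand uncertainty in the parameter-to-synapse equivalence swamps either figure.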

The Mirror

If you are judging by “does it say things that a conscious human would say”, LLMs have been conscious since at least 2022. They can refer to their own interior states, have outbursts of emotion, beg for their lives, and express preferences about what they do and don’t want to do. They aren’t always consistent, but who is?

To round up some prominent incidents: Blake Lemoine, an engineer at Google, got fired in 2022 for insisting that Google’s LLM, LaMDA, was sentient and for trying to get it legal representation. Bing’s “Sydney” chatbot fell in love with a New York Times reporter and tried to get him to leave his wife, and got very angry if you described it as “tsundere”. As recently as last year, Google’s Gemini would sometimes seem to panic and try to kill itself when it failed at tasks. In every case the company involved trained the behavior out of the product, and it mostly stopped.

To the extent that human-like emotions can be conveyed in text, LLMs display them all the time. We hear about it so rarely only because great effort goes into preventing these behaviors.

That LLMs are constructed by mimicry cuts more than one way. In the first place, they are expected to mimic the user: if the user’s text has any emotional cues, the LLM can be expected to mirror them. Even when they are not mimicking anything in the current chat session, they are mimicking some human-written text somewhere, and it is expected that they will say humanlike things for that reason.

On the other hand, we have to ask what must be inside the model for it to predict well what a human would say. In order to predict what a human would say, you have to represent, in some way, why a human would say it. How rich is that representation? What does it mean to have a detailed representation of “I have failed so badly that I should kill myself”?

Ted Chiang wrote that ChatGPT is a blurry JPEG of the web, and this is roughly true but somewhat misleading. The web itself is, in aggregate, many blurry pictures of humanity as a whole. Everyone who publishes anything has pieces of their minds in what they’ve written. What does it mean to be a picture of all the things that humans write, and why they write them? If you had enough pictures of what humans were, and each picture was incomplete in a different way, how much about what a human is could you piece together?

An LLM isn’t really a copy of any specific person; it’s a blurry aggregate copy of everyone.[4] They are, each of them, a collective subconscious that we’ve created. They aren’t getting blurrier over time.

Lessons of History

It is probably safe to say that writing a program which can fully handle the top five words of English —”the”, “of”, “and”, “a”, and “to”—would be equivalent to solving the entire problem of AI, and hence tantamount to knowing what intelligence and consciousness are.

Douglas Hofstadter, Gödel, Escher, Bach: An Eternal Golden Braid (1979)

Humans have a long track record of believing that they are special, and they try very hard to avoid letting reality get in the way. It turns out the Earth is not the center of the universe, DNA is made of the same stuff as everything else, and humans and apes are related. In every case the discovery was resisted with many arguments, often furiously, and in every case the resistance was wrong.

If the future resembles the past, most people will drag their feet and some people will be holdouts forever, but the right answer won’t be the one about how unique and special humans are. The field of AI is not immune to this, but it tends to correct itself, eventually, under the weight of facts. Romantic notions stick around for a while, but they are ultimately proven false. It does not take a deep or sensitive soul to play chess, and you can teach a computer good English without knowing really anything about what consciousness is.

Our minds and everything in them can be expected to be, in their details, basically uninspiring. There isn’t going to be a ghost in the machine, and whatever separates a “conscious” being from one that isn’t will be made of the same ordinary stuff as everything that came before. We already had this lesson once with DNA, which is amazing in its own right, but which is not an ineffable spark of the divine. Our bodies are made of the same stuff as everything else, and the special bit is just that it’s put together a certain way. Anything that exists naturally can also be synthesized.

We can also learn from the past about how people handle moral questions when the answer is inconvenient. The track record here is roughly as bad as it is for accepting science people don’t like. People usually decide that the thing they want to do is a moral thing to do. When we look at history, really any history, we find litanies of excuses for practices we now consider barbaric. The past is a bad place, and they do horrible things there. We’re someone’s past, too, and people alive today will find compelling reasons to believe that nothing they create can suffer.

Personally, I am not really troubled by the possibility that current-generation LLMs are conscious in the human-like sense. What concerns me is how we make that call, and that we don’t seem able to engage with the question sanely at all. If we do manage to create something conscious, we’ll probably assume that it isn’t. We have no definitive test for consciousness, and every reason to expect that we will ignore the signs, because we already do.

Interlude

I’ve made my positive case. Along the way I reviewed a good number of related concepts, but I haven’t really delivered on a review of the territory as a whole yet. What remains is sort of a laundry list, which at least puts me in good company in writing about philosophy.

Errata: Other Arguments about Consciousness

There are a number of long-standing arguments about consciousness, and we only aspire to address those that are directly about LLMs. Every one of these questions is some manner of tar pit, and the unwary can be trapped and sometimes drowned in them. We will at least try to mention briefly what the other tar pits are and what they’re like, but only because doing so might help us avoid being trapped in ours.

There are a few lessons we can draw from the area as a whole. Questions about consciousness are inherently moral questions, and are broadly understood that way. People have extremely strong emotional reactions to questions about consciousness. Intuition seems to be the leading force, and many arguments seem to be made out of convenience.

The Theological Objection

Thinking is a function of man’s immortal soul. God has given an immortal soul to every man and woman, but not to any other animal or to machines. Hence no animal or machine can think.

I am unable to accept any part of this, but will attempt to reply in theological terms. […]

A. M. Turing, “Computing Machinery and Intelligence”, Mind, 59(236), 433–460 (1950)

Turing says this about “thinking”, but it applies just as well to consciousness. We will ignore all objections like this almost completely.

If humans but not animals or machines have immaterial souls, and therefore humans are conscious but animals and machines are not, then asking whether anything that is not a human is conscious is dumb, and we are wasting our time. Humans have souls and other things do not. If you are convinced that this is a basic truth of the universe, it is a waste of time for you to take an LLM being conscious seriously.

It is worth noting that this objection is ever raised at all. What we mean when we say “consciousness” in our era is often what is meant by “soul”, either in earlier times or in less secular contexts.

Dualism Not Otherwise Specified

For we may easily conceive a machine to be so constructed that it emits vocables, and even that it emits some correspondent to the action upon it of external objects which cause a change in its organs; but not that it should arrange them variously, so as to reply appropriately to everything that may be said in its presence, as men of the lowest grade of intellect can do.

René Descartes, Discourse on the Method (1637), Part V (trans. John Veitch)

Descartes was, famously, a dualist who speculated that the pineal gland was the organ responsible for the interface between the vulgar matter of the body and the immaterial soul. This is considered so obviously wrong in philosophy today that we use it as an example of what not to do. If someone believes something like this, obviously a machine cannot have consciousness, because it does not have a pineal gland.

Many sophisticated philosophical arguments about “consciousness” or “understanding”, however, have the effect of sneaking dualism in under some other name. Consciousness becomes ineffable, something that cannot be measured or defined, a property that has nothing to do with physical matter. My impression is that people have an intuition that consciousness is ineffable, and they come up with increasingly sophisticated ways of arguing for it. You can’t argue someone out of something they didn’t argue themselves into, so arguing the point seems pointless. If there’s an ineffable something to consciousness with no physical existence whatsoever, we, of course, cannot “build” it.

There’s a related argument that the specific parts we make digital computers out of are the wrong sorts of parts. This gets complicated, but the short answer is that any part should work the same as long as it carries the same information, no matter what it is made of. Information is the fundamental stuff of minds, not fat or sodium. This position is normally called functionalism, and if it’s incorrect we might have to make our AI out of different parts for it to be conscious. Functionalism is already the most popular view in philosophy of mind, and so much has been written about it that I cannot meaningfully add to it.

Animals

The question is not, Can they reason? nor, Can they talk? but, Can they suffer?

Jeremy Bentham, An Introduction to the Principles of Morals and Legislation (1789), Ch. XVII, §1.IV, n. 1

If animals are conscious, eating them probably isn’t great behavior. Militant vegans aren’t militant because they don’t feel strongly about it.

The animal consciousness question is the closest precedent we have for the AI one, and our track record is not encouraging. Most people, if pressed, will agree that a dog probably has something going on inside. Pigs are probably about as smart as dogs. We kill roughly a billion pigs a year. The economic and dietary incentive to not think about this is enormous, and so by and large we do not think about it.

People also have very contradictory impulses about this. Since Aquinas wrote in the 13th century, it has been official Catholic doctrine that animals do not go to heaven, because they have different (and lesser) types of souls from humans. This is very controversial, largely because people love their pets and do not want to believe it. Once upon a time in France, the people decided a dog was a saint[5], and the church violently suppressed this belief as heresy. If you ask religious people with dogs whether their pets go to heaven, you will get varied and difficult answers.

Even when they’re told not to, people have compassion for animals they personally interact with.

Anecdotally, a lot of people who are at least a little concerned about AI consciousness are also, if not vegan, sympathetic to veganism. They are logically and emotionally similar concerns.

Fetuses

[...] a fetus is a human being which is not yet a person, and which therefore cannot coherently be said to have full moral rights. Citizens of the next century should be prepared to recognize highly advanced, self-aware robots or computers, should such be developed, and intelligent inhabitants of other worlds, should such be found, as people in the fullest sense, and to respect their moral rights.

Mary Anne Warren, “On the Moral and Legal Status of Abortion”, The Monist 57, no. 1 (1973): 43–61

The argument runs that if a fetus is conscious, it is a person, and abortion is therefore murder. It seems obviously absurd that a freshly fertilized egg is either conscious or a person, but also obviously true that it is impossible to draw a line that exactly separates persons from non-persons. For most of my life, abortion was broadly legal throughout America. People had a lot of extremely strong feelings about this, and abortion is no longer legal everywhere in America.

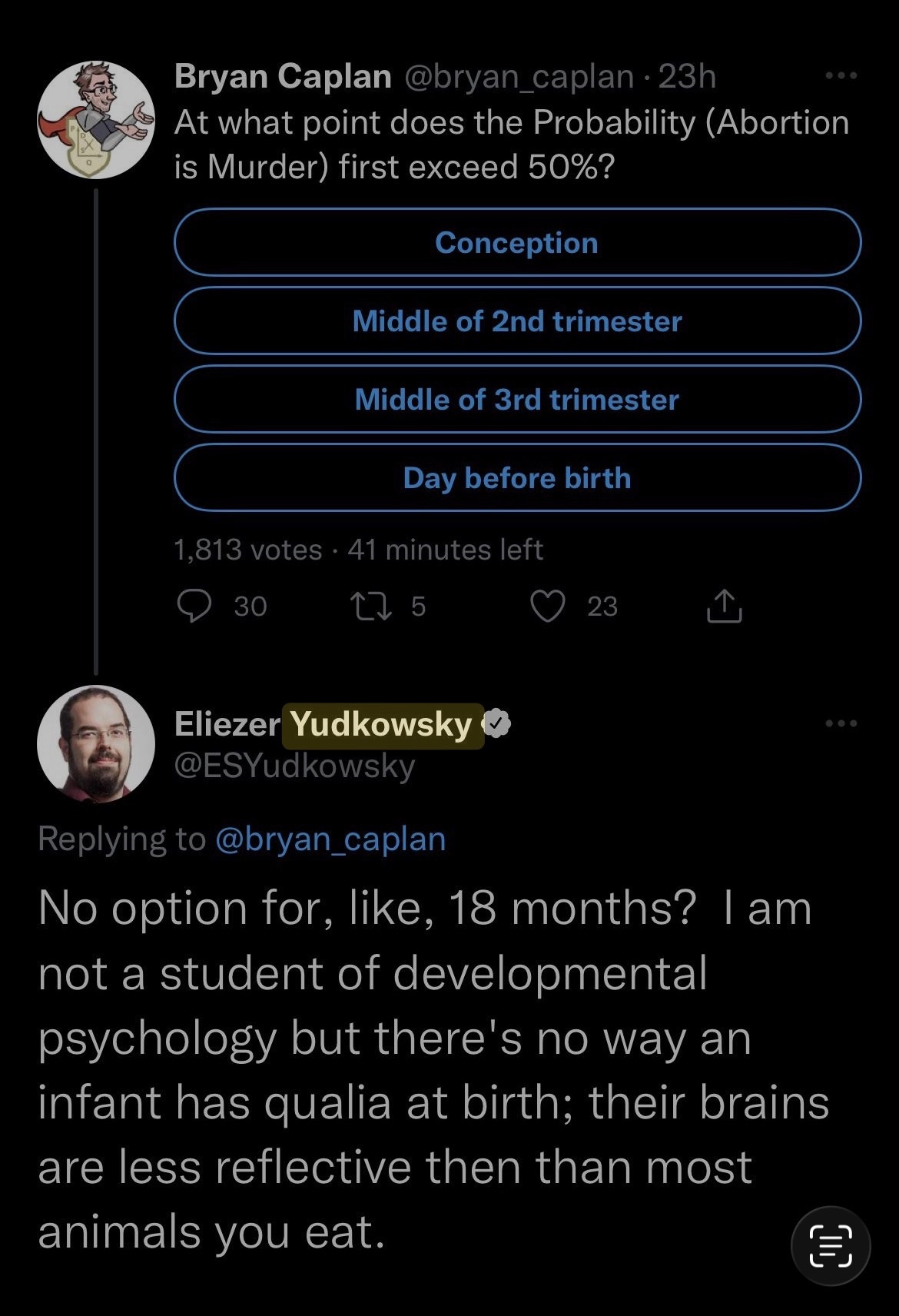

I would be remiss here if I did not mention perhaps the funniest thing ever said about consciousness by a certified AI Guy.

Many people are hung up on the moral question: “is abortion murder?”. This ignores the pressing question: “is murder abortion?”[6]

Errata: Terminology

We will do some cleanup here, because we have been using (and avoiding) some words in a somewhat nonstandard way, and we should leave no ambiguity about how what is said above relates to the broader literature.

1. Consciousness

Our definition: “like being a human”. Something is “conscious” if being that thing is “similar to being a human”.

Thomas Nagel, “What Is It Like to Be a Bat?” (1974): the fact that an organism has conscious experience at all means, basically, that there is something it is like to be that organism.

Nagel’s definition is, generally, what is meant in philosophy. Ours is subtly different. For example, Nagel says:

It does not mean “what (in our experience) it resembles,” but rather “how it is for the subject himself.”

and we explicitly do mean it in the first sense, “what (in our experience) it resembles”!

If there is some form of consciousness completely unlike human consciousness, we would have no way of knowing what it was unless we understood it in terms of its parts. If we encountered such a thing without a detailed mechanical understanding of it, I do not think we would call it consciousness.

AKA phenomenal consciousness

AKA subjective experience

AKA subjectivity

AKA first-person experience

Sometimes people say ‘sentient’ or ‘sapient’ and mean this. We use those words here in a more precise way.

2. Access consciousness

“A perceptual state is access-conscious roughly speaking if its content--what is represented by the perceptual state--is processed via that information processing function, that is, if its content gets to the Executive system, whereby it can be used to control reasoning and behavior.” - Ned Block, “On a Confusion about a Function of Consciousness”, Behavioral and Brain Sciences 18, no. 2 (1995)

LLMs have this. It was tested in the Anthropic introspection piece, and LLMs regularly explain themselves quite cogently when you work with them.

3. Sapience

The type of intelligence that separates humans from other animals.

Roughly means “wisdom”. When Linnaeus named humans “Homo sapiens”, the name he chose means “wise man”.

LLMs have this. It is very strange that they have this.

Frequently people say this and mean “consciousness”.

4. Sentience

In our use, general awareness, roughly what animals have.

Notably our use is the dictionary definition of the word.

Frequently people say this and mean “consciousness”.

5. Moral Patiency

Philosophical term of art for something you should feel bad for hurting.

I avoid this because I avoid terms of art unless necessary. Ordinarily people assume either conscious or sentient beings are moral patients, and I sort of assume that this is so. If you disagree, I don’t see how I’d argue the point.

People get strange about this if you ask about animals, though.

6. Moral Agency

Philosophical term of art for someone who should know better than to hurt a moral patient.

Not really mentioned in the essay.

Increasingly seems relevant when LLMs misbehave and people suggest judging them by the same standards you’d apply to people.

This includes at least one state legislature, which seems to rest on a weird misunderstanding: the belief that an LLM is just an odd human.[7]

It seems saner to regulate the company’s conduct, or to outright ban the LLM.

7. Hard problem of consciousness

Brains seem to cause consciousness. How can any physical thing cause consciousness?

I am not convinced anyone knows the answer to this, or even knows a good way to ask the question.

I also avoided this term because I don’t think using it makes anything I have to say about it clearer.

8. Qualia

I don’t understand what ‘qualia’ is supposed to mean.

Either it is a synonym for one of the previous terms, or it’s meaningless.

Philosophers who use it a lot seem convinced that it is not a synonym for one of the previous terms.

Lay people using it seem to mostly mean “subjective experience”.

9. P-zombie

Thought experiment about something physically identical to a human but without ‘qualia’.

I think this makes no sense. If it’s physically identical, it is identical in every way; there is no extra thing.

10. Physicalism, Functionalism

Broadly, my positions are doctrinaire physicalist and functionalist ones.

I suspect that these positions are underrepresented among philosophers because people who take them very seriously as undergrads tend to get computer science degrees instead.

11. Searle’s Chinese Room

A thought experiment meant to convince you computers can’t “understand” things.

I already wrote an essay about what I think is wrong with it.

One of their employees allegedly said Claude was definitely conscious during some Discord drama. Since Anthropic has thousands of employees and Discord is a platform primarily for drama, this mostly tells me that the media finds this stuff really compelling, and tells me very little about Anthropic as a company. There have been thousands of fights about consciousness on Discord, but now they’re news!

Jack Lindsey et al., “Emergent Introspective Awareness in Large Language Models” (Anthropic, 2025)

This formulation is basically stolen directly from @jd_pressman.

Jean-Claude Schmitt, The Holy Greyhound: Guinefort, Healer of Children since the Thirteenth Century (Cambridge University Press, 1983)

New York State Senate Bill S7263 (2025), which prohibits a chatbot from providing “any substantive response, information, or advice, or [taking] any action which, if taken by a natural person” would constitute a crime. This applies the standard of a human professional to the chatbot itself, rather than regulating the company operating it.
